Hi Folks!

We’ll try to do a bi-weekly installement of Addons for Empathy (until we run out of addons). This one is a two-parter: Our main installment is about working with proxies in Blender, the second is about a bold new experiment in Rig UI.

Proxy Workflow and Transparent Proxies Addons:

Get the files from my gitorious

The video is about two addons, both making proxy editing in the sequencer more friendly to our project. A quick explanation:

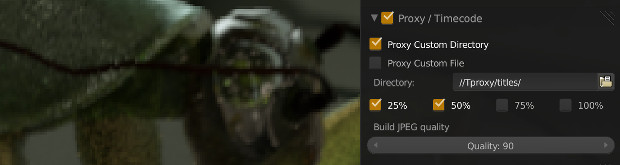

Blender’s Video Sequence Editor or VSE for short has a feature called proxies. This basically allows an in-place replacement of strips by 25%, 50%, 75% or 100% versions, in a fast format (.jpg or motion jpg) This is especially useful when:

- Editing Large format files that are too slow to be realtime – either in resolution (2K or 4K) or in type (.EXR!!!)

- Editing over the network, especially files of the previous types

- Working with complex and multiple effects that could be benefit from being cached

So Proxies in Blender work a bit like a combination of proxies and caches. I prefer them as the former, since it skips having to recalculate every single you change some timing – instead they only need to be recalculated when the sources change.

However, working with proxies in Blender can be painful by default, and this is where Proxy Workflow Addon comes in:

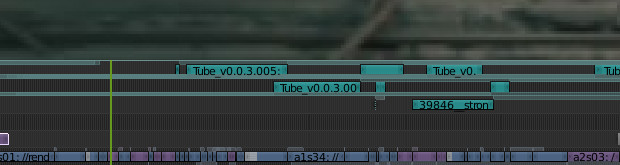

- Editing Proxy settings must be done strip by strip: Proxy Workflow lets you set them for all selected strips at once

- Default location is in the same folder as the originals, which is bad in the case of network shares; Proxy Workflow automatically sets them to a local directory “TProxy” that contains all the proxies for the edit, and can be moved around like a scratch disk

- Sometimes Blender tries looking for the original files even when it is using proxies. If you are trying to use proxies to avoid using the network/internet, this becomes a problem. Proxy workflow allows ‘Offlining’ strips, and then ‘Onlining’ them again when you can reconnect to the network

- Blender doesn’t know when the source files are ‘stale’ and need to be re-proxied – for instance if you rerender. Proxy workflow timestamps as it makes proxies, allowing you to select a bunch of strips and re-proxify only the changed ones.

- Proxy workflow is designed to work with movies and image strips only for now, as I’m interested in true proxies, not caching effects.

A seperate addon is called ‘Transparent Proxies’ and does what it says on the tin (and no more): It allows making proxies of image sequences that preserve the alpha channel for alpha over effects. It does this by cheating: It uses Imagemagick on the commandline to make a .tga proxy, and just renames to .jpg to satisfy Blender. You need to install imagemagick first for it to work.

Bonus: Rig UI Experiment:

Code is at gitorious

This brings us to the bonus round- the Rig Selection UI. I’m continuing my round of experimentation with BGL and modal addons, to make the kind of ‘typical’ rig ui where animators can select or act on a rig by clicking on an image. This ui is using an SVG file to define the hotspots, and a PNG to actually draw the image. It already works, though I’m still going to refine it and add more options/ easier rig customizability. The end goal is to be able to do Rig UIs without writing code, simply by drawing them in Inkscape and pressing a few buttons in Blender. Stay tuned!!!